In this issue, I’ve consciously tried to balance topical diversity and fun, a little like the exploration-exploitation tradeoff in reinforcement learning. Fun (exploitation) is good for keeping the newsletter going, but it makes me “settle” into certain topics; diversity (exploration) is better for the newsletter in the long run, but in the short term it sometimes feels like a chore.

Links:

In Soviet Union, Optimization Problem Solves You by Cosma Shalizi (7,800 words, 31 mins)

Three Ways to Advance Science by Nick Bostrom (600 words, 2.5 mins)

Physical intuition from physics experience by Matthew von Hippel (600 words, 2.5 mins)

Bongard problems by David Chapman (5,000 words, 20 mins)

Commoditize your complement by Gwern Branwen (8,000 words, 32 mins)

A reply to Francois Chollet on intelligence explosion by Eliezer Yudkowsky (7,900 words, 31 mins)

Mathematics, morally by Eugenia Cheng (7,000 words, 28 mins)

Modern gospels by Mario Gabriele (4,700 words, 19 mins)

The witch technical interviews (reversing, hexing, typing, rewriting, unifying) by Kyle Kingsbury (13,300 words, 53 mins)

Selfies as a second language by Eugene Wei (1,300 words, 5 mins)

In Soviet Union, Optimization Problem Solves You by Cosma Shalizi (7,800 words, 31 mins): Cosma argues that top-down optimal planning for a socialist economy couldn’t have worked because of computational complexity constraints, even granting numerous simplifying assumptions: linear optimization; complete, accurate knowledge of the economy’s physical constraints, resources, and productive capacities (i.e. how well inputs get converted to outputs); and settling for approximate rather than exact optimality.

Three Ways to Advance Science by Nick Bostrom (600 words, 2.5 mins): made me look at thermometers with newfound awe. Bostrom’s 3 ways: (1) Direct: conduct a good study, publish results. Can be significant, but usually useless outside of its domain, e.g. general relativity doesn’t help neuroscientists. (2) Indirect: help others do #1, e.g. journal editors, university admin, research funders; the scientific method, basic statistics, instruments like thermometers and computers, and institutional innovations like the peer-reviewed journal. (3) Combine #1 and #2: a direct advance that expedites advances, e.g. cognitive enhancement. Imagine, says Bostrom, a safe cheap drug that improved cognition by just 1%. Negligible in an individual, but over all the world’s ~10 million scientists the drug’s inventor would’ve advanced science by the equivalent of 100,000 additional scientists, far surpassing even peak Einstein/Darwin!
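Bostrom’s arithmetic is easy to check. Here’s a minimal sketch, assuming (as the thought experiment implicitly does) that research output scales linearly with cognition; the function and parameter names are mine, not Bostrom’s:

```python
def equivalent_extra_scientists(num_scientists: int, cognition_gain: float) -> float:
    """Scientist-equivalents gained if every scientist improves by `cognition_gain`.

    Assumption (not from the essay's text): output is proportional to cognition,
    so a 1% boost to N scientists counts the same as adding 0.01 * N scientists.
    """
    return num_scientists * cognition_gain


# Figures from the summary above: ~10 million scientists, a 1% improvement.
extra = equivalent_extra_scientists(10_000_000, 0.01)
print(f"{extra:,.0f} scientist-equivalents")  # prints "100,000 scientist-equivalents"
```

The point of the exercise is the multiplier: a tiny per-person gain only looks negligible until you multiply it by the whole population it applies to.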

Physical intuition from physics experience by Matthew von Hippel (600 words, 2.5 mins): there’s a great quote by Richard Feynman underscoring the role of physical intuition in solving physics problems: “as problems get more and more difficult, and as you try to understand nature in more and more complicated situations, the more you can guess at, feel, and understand without actually calculating, the much better off you are!” (This is also the source of much irritation when mathematicians interact with physicists. The former expect formal rigor in reasoning; from this POV the latter often make “unjustified leaps” in arguments.) Still, it works; Ed Witten for instance used it to do work in knot theory that won him the Fields Medal. How? Matthew (who goes by 4 gravitons online) walks through the example of a talk on effective field theories given by “a master of physical intuition” to make 3 points: (1) it comes from experience: you need to have seen problems with similar mathematical structure before; (2) it doesn’t replace calculation, but it inspires new solutions and helps us remember what we already know, because it’s narrative-like; (3) it can lead you astray, so definitely check by calculating!

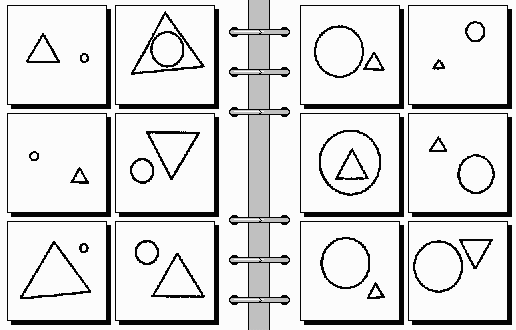

Bongard problems by David Chapman (5,000 words, 20 mins): analogy is the core of thinking; it manifests in good problem formulation, which is most of the work of solving research problems. Bongard problems take this to the extreme: the contents of the left boxes in the image above have something in common; so do the ones in the right boxes, except the thing in common is the opposite. What is it? So instead of typical problem solving (“given rules and a case, how to apply the rules to the case?”), the task is to find out what the rule is.

Commoditize your complement by Gwern Branwen (8,000 words, 32 mins): an alternative to vertical integration, in which a company in a tech stack (1) dominates its own layer and (2) fosters so much competition in an adjacent layer that no competing monopolist can emerge there (and any upstarts, e.g. Instagram or WhatsApp, can just be bought out or suppressed). This drives prices elsewhere in the stack down to marginal cost, lowering the total price and hence raising demand, and most of the surplus revenue then gets diverted to the quasi-monopolist. In software, this is the commercial motivation behind companies pushing open source: IBM funding Linux, Netscape open-sourcing Navigator, Sun developing Java, Microsoft buying GitHub, Google steadily funding oddball projects, iOS/Android vs smartphone cos. Outside of software: Uber/Grab vs self-employed drivers, voice synthesizers/musicians vs singers, restaurants vs food order apps/reservation booking cos. Commoditizing your complement is preferable to vertical integration because (1) you can stay small, lean, and nimble; (2) you can do it with small strategic investments, e.g. releasing IP; (3) you can do it incrementally, focusing on small layers, unlike the all-at-once actions of vertical integration; (4) you retain a facade of competition, avoiding anti-monopoly regulations.

A reply to Francois Chollet on intelligence explosion by Eliezer Yudkowsky (7,900 words, 31 mins): a sobering reminder that even good experts who write stuff I like can be uncharitable in assessing arguments from adjacent fields (forget the pontification of public intellectuals). I like Francois; his Deep Learning with Python textbook is great; with that and Keras he’s done more for AI than 99+% of researchers. But his essay arguing that intelligence explosion is impossible evinces no awareness of even basic AI risk arguments, just strawmen. (Satirically, Ben Garfinkel’s On the Impossibility of Supersized Machines “proves”, using similar arguments, that machines larger than humans can’t possibly exist.) In this essay, Eliezer walks through those basic arguments in response to Francois’ points: e.g. that no-free-lunch theorems are often irrelevant, that there’s an important difference between human and (say) octopus intelligence that merits the term ‘general’ even though there’s no ‘absolute’ sense in which humans are smarter, that generalized arguments against AGI need to explain why they don’t rule out humans, that intelligence is a superpower, that something having precedent doesn’t make it harmless, etc. I just wish Francois addressed these…

Mathematics, morally by Eugenia Cheng (7,000 words, 28 mins): proofs are (supposedly) the backbone of truth in math, but in practice they contribute little to a mathematician’s understanding; instead “moral” considerations take primacy, as evinced in “ought”-statements like Peter Johnstone’s remark that “constructive general topology ought to be about locales and not spaces” — just as morality is about how people should behave, math morality is about how math should ‘behave’. Proof convinces society, writes Eugenia; morality convinces us. We don’t communicate moral reasons directly because it’s harder to do so; in other words, a proof’s key property isn’t infallibility/convincingness but transferability. But the translation from moral reason to proof is tedious; the reverse translation is a slog too. If only there were a ‘system’ that’s morally complete, i.e. that’s adequate (everything that ought to be true is provable) and sound (anything that shouldn’t be true isn’t provable)… but wait, claims Eugenia, there is: category theory! This suggests that category theory could be thought of as “formalizing math morality”, so e.g. a moral reason is a categorical proof, a moral question is one that can be stated categorically (i.e. “you can’t formulate silly questions if you tried”), etc. A mathematician with a moral bent would be the opposite of a pragmatist: to prove a theorem, instead of just trying everything that comes to mind, she’ll try to think of a reason it “must be true”, then turn that reason into a proof.

Modern gospels by Mario Gabriele (4,700 words, 19 mins): religion, per Emile Durkheim, is a social phenomenon endemic to human collectives with 3 key traits: (1) a unified system of beliefs/rituals, (2) a community that protects and practices #1, (3) sacred objects (deities, tokens, tenets) made sacred by the same process that births and sustains religion: collective ritual/worship evoking groupish transcendence (“mana”), represented by the now-sacred object to sustain and emphasize it — a spiritual flywheel. Durkheim juxtaposes these with the “profane”, everything that isn’t sacred, to create a distinction between ingroup believers and outgroup infidels. Technology (e.g. social media) supercharges the spiritual flywheel by easing connection, incentivizing conflict, worsening radicalization (via echo chambers), fomenting polarization, and creating miracles (e.g. deepfakes): hence QAnon, Jobs’ Apple (but not Cook’s), bitcoin, and Tesla, plus minor half-examples like Y Combinator, wallstreetbets, Thiel-style contrarianism, and even note-taking (Roam Research). This, Mario argues, suggests that e.g. investors should back “unacknowledged religions” (although WeWork and Theranos are cautionary tales), and that founders should form cults around ideas.

The witch technical interviews (reversing, hexing, typing, rewriting, unifying) by Kyle Kingsbury (13,300 words, 53 mins): what if a witch interviewed for software engineering roles? Kyle, whose day job is designing distributed databases, also writes fantastic fiction here. Impossible to summarize, so here’s a taste:

> If you want to get a job as a software witch, you’re going to have to pass a whiteboard interview. We all do them, as engineers–often as a part of our morning ritual, along with arranging a beautiful grid of xterms across the astral plane, and compulsively running ls in every nearby directory–just in case things have shifted during the night–the incorporeal equivalent of rummaging through that drawer in the back of the kitchen where we stash odd flanges, screwdrivers, and the strangely specific plastic bits: the accessories, those long-estranged black sheep of the families of our household appliances, their original purpose now forgotten, perhaps never known, but which we are bound to care for nonetheless. I’d like to walk you through a common interview question: reversing a linked list.

Selfies as a second language by Eugene Wei (1,300 words, 5 mins): there are stark generational divides in tech consumer behavior if you know where to look, driven by tech shifts: we shape our tools, then they shape us. Snapchat gives a particularly clear example, claims Eugene: send a Snap to older folks and they respond with either text or emojis; send it to young’uns and they respond with a selfie. His guess: old folks didn’t grow up with smartphones so they experience “photographic body alienation” because cameras introduce distortions (“adds ten pounds”), but young’uns don’t because they’re used to smartphone cameras, they’ve internalized the disparity between selfies and the real world like models/celebs do, and they’ve spent a lot of time mastering the selfie (e.g. A/B testing selfie poses for most likes) as a rational strategy to accumulate social capital, driven by the insight that virtual self > physical self. Since older folks get set in their ways, this means selfies will always be a “second language” to them.